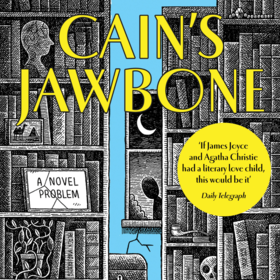

Cain’s Jawbone Murder Mystery

$300 USD

Completed (over 3 years ago)

Prediction

263 joined

39 active

Start: Dec 15, 22

Close: Dec 31, 22

Reveal: Dec 31, 22

Interests sake

Connect · 28 Dec 2022, 07:02 · 7

Has anyone tried solving the problem "manually" by reading the story and trying to put it together "old school"?

The challenge is to try to build a model, but maybe a human brain is better than a computer brain...

Haven't tried; it seems very time-consuming.

@amyflorida626 so is "manually" solving (or probing) a valid way to compete? We see that the LB has exploded with "good" scores because part of the answers were released on the forum. Are probing/manual labeling approaches valid as a final solution, or is it necessary to contribute a pure NLP solution?

It is really tricky ... I've been grepping the text a lot. It does help: you can tweak the model to be better, but then it is not pure ML.

@NikitaChurkin the solution is probably not pure, but I think the probes are publicly known and are a small portion of the total solution. You can use them to calibrate your ML a bit. Even in practice it would be foolish to ignore such bits of info and not use them to add to the model.

You can use them implicitly, but is it allowed to label answers (any, not only the right ones) by hand? Something like sample_sub.iat[19, 90] = 7 in your code? I believe LB scores > 0.1 are not achievable without such direct labeling of the right answers.

Well ... I'll discuss a bit more freely once the comp closes. The text is very cryptic but does contain a few clues that I think you can and should use to manually calibrate the optimiser. Same with probes.

Anyhow, you know the probes. Why not load them into your solution and see what happens to your score?

Perhaps it is like a chess engine. You can have a strong engine, but you will still load the moves of well-known openings and endgames to keep the ML from disaster.
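To make the "load the probes" idea concrete, here is a minimal sketch in Python. It assumes the model output is a plain list of page ids in predicted reading order and that the probes are a dict mapping a position to the page known to belong there. Both representations, and the name `apply_known_positions`, are hypothetical and are not the competition's actual submission format.

```python
def apply_known_positions(predicted_order, known_positions):
    """Return a copy of predicted_order with known pages forced into place.

    predicted_order: list of page ids; the list index is the position in the book.
    known_positions: dict {position: page_id} taken from publicly known probes
                     (assumed consistent: distinct positions, distinct pages).
    """
    order = list(predicted_order)
    for pos, page in known_positions.items():
        current = order.index(page)  # where the model placed this page
        # Swap the known page into its position; the displaced page takes its old slot,
        # so the result is still a valid permutation.
        order[current], order[pos] = order[pos], order[current]
    return order


# Toy example with 5 pages: the model's guess, plus two hypothetical probes.
predicted = [3, 0, 2, 1, 4]
probes = {0: 0, 4: 1}  # page 0 is first, page 1 is last (made-up values)
fixed = apply_known_positions(predicted, probes)
print(fixed)
```

Swapping rather than overwriting keeps every page in the ordering exactly once, which matters if the score is computed on a full permutation.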

@amyflorida626 any comments / guidance?

Hi Skaak and Nikita,

This challenge was a bit weird, and that was not clarified in the rules. I am sorry.

This was more of an anything-goes kind of challenge. Preferably no probing, but it was okay. As the puzzle has only been solved 4 times manually, the publishing company was interested to see if ML could do something better.

I think probing helped as it gave you a base, but you wouldn't have gotten far with probing and the submission limit.

There were 75! possible orderings and 39 participants x 200 submissions each. Even if you all worked together, there was only a chance on the order of 1e-106 of hitting the nail on the head.
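The arithmetic is easy to check. This is a rough sketch, assuming 75 pages to order, every one of the 39 x 200 submissions being a distinct guess, and no information gained from the scores. The variable names are illustrative only.

```python
import math

pages = 75              # pages to put in order, per the post above
participants = 39       # active participants
submissions_each = 200  # submission limit per participant

orderings = math.factorial(pages)              # 75! possible page orders
total_guesses = participants * submissions_each
chance = total_guesses / orderings             # probability that one guess is exactly right

print(f"orderings     ~ {orderings:.2e}")
print(f"total guesses = {total_guesses}")
print(f"chance        ~ {chance:.1e}")
```

Under these assumptions the chance comes out at a few times 1e-106, which matches the order of magnitude quoted.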