Lacuna - Correct Field Detection Challenge

Helping East Africa · $10 000 USD · Prediction · Earth Observation

Completed (almost 5 years ago) · 634 joined · 110 active

Start: Mar 26, 21 · Close: Jul 04, 21 · Reveal: Jul 04, 21

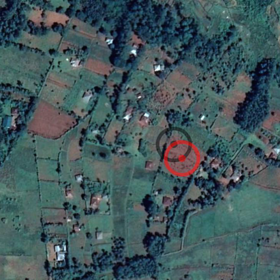

Image collisions

Help · 1 Apr 2021, 18:41 · edited ~1 hour later · 4

The dataset contains cases where a single image carries the coordinates of several fields. For example:

'id_6b5e6f60', 'id_6ff2c679', 'id_cd8562ff', 'id_ee39a2cb', 'id_f082b348'

are 5 different fields with coordinates on the same image.

How are we supposed to know which particular field on the image we should determine the coordinates for?

Another example, the list of fields that share one image in the TEST set:

'id_6594c18d', 'id_65864854', 'id_0de7e770', 'id_c3ab5c47', 'id_9fa0ccaf', 'id_7fc14be1', 'id_a3da540a', 'id_9d0a92a8', 'id_a45ced03', 'id_6750b10d', 'id_91f92588', 'id_7b485411', 'id_b7952dd0', 'id_96145c71', 'id_b78fdbef', 'id_89f6c269', 'id_11a52bf5', 'id_89184e52', 'id_35a40737', 'id_16931b7c', 'id_543afbf2', 'id_69434f11'

What is also concerning is that train_Data seems to be inaccurate. Eliminating all rows plagued with missing values might help, but removal only works well when the percentage of affected rows is low, and a lot of the data seems inaccurate. Do the organisers of this challenge have access to the true data to judge the submissions?

For instance, rows 4-7 of train_Data all reference the same planet images and carry identical training data, with quality shown as good (3) and offsets of x, y = (0, 0). If the IDs are meant to represent separate fields, then the yields and sizes should differ at least somewhat. If no better training data exists, this becomes garbage in, garbage out. And if the submissions are scored without true values (that is, against data similar to train_Data), then ML won't help much.

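| | ID | Year | PlotSize_acres | Yield | Quality | x | y |
|---|---|---|---|---|---|---|---|
| 4 | id_a1ce519e | 2017 | 1.5 | 0.26875 | 3 | 0 | 0 |
| 5 | id_66ea43d6 | 2017 | 1.5 | 0.26875 | 3 | 0 | 0 |
| 6 | id_14155539 | 2017 | 1.5 | 0.26875 | 3 | 0 | 0 |
| 7 | id_dc0031fe | 2017 | 1.5 | 0.26875 | 3 | 0 | 0 |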
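One way to surface rows like these is to group IDs by everything except the ID itself. The following is only a rough sketch using the four rows quoted above plus one made-up control row (`id_made_up` and its values are hypothetical, added so the check has something it should not flag); the helper name `find_identical_groups` is also just illustrative.

```python
from collections import defaultdict

# Columns: ID, Year, PlotSize_acres, Yield, Quality, x, y
# Rows 4-7 as quoted in the post; the fifth row is a made-up control
# (hypothetical ID and values) that should NOT be flagged.
rows = [
    ("id_a1ce519e", 2017, 1.5, 0.26875, 3, 0, 0),
    ("id_66ea43d6", 2017, 1.5, 0.26875, 3, 0, 0),
    ("id_14155539", 2017, 1.5, 0.26875, 3, 0, 0),
    ("id_dc0031fe", 2017, 1.5, 0.26875, 3, 0, 0),
    ("id_made_up", 2018, 2.0, 0.5, 2, 1, -1),
]


def find_identical_groups(rows):
    """Return lists of IDs whose non-ID values all match exactly."""
    groups = defaultdict(list)
    for row_id, *values in rows:
        groups[tuple(values)].append(row_id)
    # Only groups containing more than one ID are suspicious.
    return [ids for ids in groups.values() if len(ids) > 1]


suspect_groups = find_identical_groups(rows)
print(suspect_groups)
```

Exact equality on the yield is fine here because the values are copied verbatim; on the real file a small tolerance might be safer.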

Hi Avoronov and thenus,

Thank you for pointing that out. There are two different types of duplicates:

- Same location with different sizes (PlotSize_acres): these should be treated as independent points.

- Same location and same size: these are erroneous and should be dropped.

We are working on it. We will fix this issue and update you soon.
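Until the corrected data arrives, the two rules above could be applied locally along these lines. This is a sketch under assumptions, not the organisers' fix: the `location` key is hypothetical (it stands for whatever identifies the shared image or coordinates), the sample records are made up, and since "dropped" could mean either keeping one copy or removing every copy, the `keep_one` flag covers both readings.

```python
from collections import Counter


def drop_erroneous_duplicates(records, keep_one=True):
    """Filter records according to the two duplicate rules.

    Each record is a dict with a 'location' key (hypothetical name) and
    a 'PlotSize_acres' key. Records sharing a location but differing in
    size are kept as independent points. Records sharing both location
    and size are treated as erroneous: either one copy is kept
    (keep_one=True) or all copies are removed (keep_one=False).
    """
    counts = Counter((r["location"], r["PlotSize_acres"]) for r in records)
    seen = set()
    kept = []
    for r in records:
        key = (r["location"], r["PlotSize_acres"])
        if counts[key] == 1:
            kept.append(r)  # unique (location, size): independent point
        elif keep_one and key not in seen:
            kept.append(r)  # first copy of an erroneous group
        seen.add(key)
    return kept


# Made-up records for illustration only.
records = [
    {"ID": "a", "location": "img_1", "PlotSize_acres": 1.5},
    {"ID": "b", "location": "img_1", "PlotSize_acres": 1.5},
    {"ID": "c", "location": "img_1", "PlotSize_acres": 0.5},
    {"ID": "d", "location": "img_2", "PlotSize_acres": 1.5},
]
cleaned = drop_erroneous_duplicates(records)
print([r["ID"] for r in cleaned])
```

Which reading of "dropped" is right depends on whether the repeated rows describe one real field entered several times or are unusable altogether, so that choice is left to the caller.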

Hi kareem,

From what I understand in this conversation, the available data is not final and you still need to update it. Is that right?

Approximately when do you plan to upload the new data?

Hi @kareem,

Can you let us know when this will be corrected?