Lacuna - Correct Field Detection Challenge

Why are we evaluating the models on data points with wrong annotations (bad quality)?

This is KarimAmer's response

== What about the leaderboard test data? At least the test set has guaranteed correct annotations, right?

-KarimAmer- The annotation process is the same for both train and test data.

== Thanks for clarifying, but is that the right evaluation? I agree that we should use data points of all quality levels in training, but one would expect the test set to have good-quality annotations. Even for your own use, is a model that gives you the lowest MAE on a test set with (1-2-3) quality points useful? Shouldn't your evaluation be based on accurately annotated data?

-KarimAmer- Sorry for the late reply. We are evaluating on all the quality levels because all of them happen in real life and we are interested in solutions that address them.

== Thanks for responding. I still think that is the wrong reason to use all quality levels for evaluation. It is this simple: yes, all quality levels happen in real life, and some of them (maybe 1/2) have mistakes. We won't throw those points away, since we would like to use that data to build our models (in training), but the evaluation should be on level 3 quality only: you learn from all quality levels, but you expect a good model to produce annotations as close to perfect/correct as possible. Please explain: if I give you a model that reproduces on the test set the same patterns of mistakes seen in training, how is that useful to your use case? How is that useful in any case?
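For anyone who wants to compare the two evaluation choices discussed here, a minimal sketch follows. It assumes each sample has a true value, a predicted value, and a quality level from 1 to 3; the function names and the toy numbers are hypothetical and are not taken from the competition data or its official scoring code.

```python
from collections import defaultdict


def mae(y_true, y_pred):
    """Mean absolute error over two equal-length, non-empty sequences."""
    if len(y_true) != len(y_pred) or len(y_true) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def mae_by_quality(y_true, y_pred, quality):
    """MAE computed separately for each quality level."""
    groups = defaultdict(lambda: ([], []))
    for t, p, q in zip(y_true, y_pred, quality):
        groups[q][0].append(t)
        groups[q][1].append(p)
    return {q: mae(ts, ps) for q, (ts, ps) in sorted(groups.items())}


# Hypothetical toy values, for illustration only.
y_true = [0.0, 1.0, 2.0, 3.0]
y_pred = [0.5, 1.0, 1.0, 3.0]
quality = [1, 2, 3, 3]

overall = mae(y_true, y_pred)                          # all quality levels
per_quality = mae_by_quality(y_true, y_pred, quality)  # one score per level

print(overall)      # 0.375
print(per_quality)  # {1: 0.5, 2: 0.0, 3: 0.5}
```

Reporting the per-level scores next to the overall score would show whether a low overall MAE comes from the confidently annotated points or from the noisier ones.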

Hi @who_told_u_that

These samples aren't "wrong"; they just have higher labeling noise (relative to the others), and as I said before, they are important to us and we want a model that handles them as well as the other samples. So the answer to your last question is yes: it would be useful to have a model that matches the expert human prediction, as it is the upper ceiling solution anyways.

Hi, thanks for taking the time

I wouldn't say this is noise

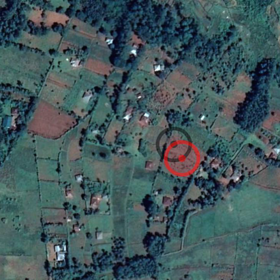

"The errors in the GPS coordinates are due to recording on the edges of the field rather than the center, or in the house which owns the farm, or under the shade of a nearby tree, or even on the main road leading to the farm."

And regarding "as it is the upper ceiling solution anyways": no, the upper ceiling is the correctly annotated points, where the annotator is confident of the center point and isn't guessing from a nearby tree or the main road: "and the annotator has to make a best guess based on size and proximity of the field. This is captured by the Quality variable in the data".

I raised an issue 3 weeks ago that I believe is important: using points that aren't even guaranteed to be within the plot limits for evaluation. But it's OK if you don't think it is a problem; in the end, it's your evaluation.

I think you misunderstood my point about the ceiling. I wasn't comparing high and low quality points. I meant that annotations made by the experts on all the points are the upper ceiling.

Let me highlight that expert annotators take into consideration several explicit factors, as described in the annotation guide post, and also some implicit geographical and agricultural factors from their knowledge of the area of interest (like farmers' behaviours and economic situation), which minimizes the annotation errors overall (compared to visual inspection only). These implicit factors come from our partner CGIAR's solid knowledge and vast experience of farmers in the area from which we have the data. If we have a model that captures the way the annotators work, it will be useful, I believe.

I hope this explanation gives you more confidence about the annotation process.

What I would like to do in the future (maybe in the next version of the competition) is to capture the model's prediction confidence. That will give more insight and better results, but it will need more data (hopefully we can get it in the future!).