Spatio-Temporal Beam-Level Traffic Forecasting Challenge by ITU

12 000 CHF

Completed (over 1 year ago)

Forecast

733 joined

171 active

Start

Jul 24, 24

Close

Oct 11, 24

Reveal

Oct 11, 24

Congrats to top-scorers! What models did you use? (Gradient boosting anyone?)

Notebooks · 12 Oct 2024, 08:23 · 17

Hi everyone!

It's been an exciting challenge to work on. Congrats to those who made it to the top!!

I'd be really interested to hear what kind of models you all used, and if you'd be willing to share some notebooks for others to learn from, now that it's over.

Personally, I used XGBoost gradient boosting, but with very limited success. In the end I switched to just averaging historical data and ensembling with a linear trend model, which improved my score by 0.02.
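For anyone wanting to try the same idea, here is a minimal sketch of blending a historical average with a linear trend for a single series. It is not the exact code from my submission: the function name `blend_mean_and_trend`, the per-hour-of-day averaging (`period=24`) and the equal `trend_weight` are all assumptions made for illustration.

```python
import numpy as np

def blend_mean_and_trend(history, horizon, period=24, trend_weight=0.5):
    """Blend a per-slot historical mean with a least-squares linear trend.

    `history` is one hourly series; it must contain at least `period` points.
    All parameter names and defaults here are hypothetical.
    """
    history = np.asarray(history, dtype=float)
    n = len(history)
    t_future = np.arange(n, n + horizon)

    # Historical average for each position in the cycle (e.g. hour of day).
    slot_means = np.array([history[s::period].mean() for s in range(period)])
    seasonal = slot_means[t_future % period]

    # Straight line fitted to the whole history, extrapolated forward.
    slope, intercept = np.polyfit(np.arange(n), history, deg=1)
    trend = slope * t_future + intercept

    return (1 - trend_weight) * seasonal + trend_weight * trend

# Two days of constant traffic: both components agree on the forecast.
flat_forecast = blend_mean_and_trend(np.full(48, 5.0), horizon=3)
```

The weight between the two components is something you would tune on a held-out week rather than fix at 0.5.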

If anyone had more success with this kind of model, it'd be nice if you could share some details, such as the features used, hyperparameters, and direct vs. autoregressive modeling.

In general, I think we'd all be interested to hear about the top-scoring approaches!

Cheers,

Emil

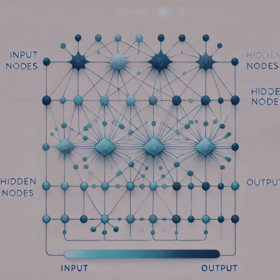

Hi, I used graph neural networks, just a simple STGCN, with limited success.

I just used a gradient boosting model, with bagging to improve it. I think the type of timestamp is also important.

My solution was purely GBDT: an ensemble of a LightGBM model (weight 0.4) and a CatBoost model (weight 0.6). I will share the code once the evaluation has ended.
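A weighted ensemble like this comes down to a weighted average of the two models' predictions. The sketch below only shows that blending step with the 0.4 / 0.6 weights; the function name `weighted_blend` and the toy prediction arrays are hypothetical, and it does not reproduce the actual models or features.

```python
import numpy as np

def weighted_blend(predictions, weights):
    """Weighted average of per-model predictions.

    `predictions` has shape (n_models, n_samples); `weights` must sum to 1.
    """
    predictions = np.asarray(predictions, dtype=float)
    weights = np.asarray(weights, dtype=float)
    if not np.isclose(weights.sum(), 1.0):
        raise ValueError("weights must sum to 1")
    return weights @ predictions

# Toy stand-ins for the LightGBM and CatBoost predictions.
lgbm_pred = np.array([1.0, 2.0])
catboost_pred = np.array([2.0, 4.0])
blended = weighted_blend([lgbm_pred, catboost_pred], weights=[0.4, 0.6])
```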

Hi Koleshjr,

Would you still consider sharing your solution?

You got a really impressive score and it would be super interesting to see! I used gradient boosting too, but obviously you did something much better.

Cheers, Emil

Hectic shake-ups :-( Are the current ranks from the private scores, @Zindi @meganomaly? I know the reveal is in 11 days, but it seems the ranks have updated.

When I look at all the ranks and their submissions, it does not look like a private-score ranking; it may be just random numbers. I hope so, but if it is the final ranking, I will sadly be at rank 62.

It looks like these are the final ranks, as I have not received a mail about my code. I am waiting for the private score reveal so that I can look at my mistakes and submission scores. @Koleshjr, have you received the mail from Zindi regarding code submission?

yes I did

Thanks for confirming

Congratulations to @koleshjr and the top 6 teams. I had a fun time learning with high-resolution data, and I can't wait to see the top solutions. I don't think the top solutions will be below 0.200000 MAE.

Yeah, it looks like it, but I did expect some teams in the top 10, such as skaak and you. We're going to learn something from the top teams.

Yeah ... shake-up ... congrats @Koleshjr, wonderful performance ... sorry @Krishna_Priya ... oh well ... for me it was really nice to learn some post-transformer ts methods, and it was worth it for that alone. But it takes 1-2 days to fit those models, so mid-way I found myself fitting a model for 1-2 days to get local CV, then, if that CV was good, fitting again for 1-2 days on all data and submitting that final model, so 2-4 days for each single model! Too long, so I ended up not doing local CV, just fitting the model straight to the full data and using the LB as a type of CV. Big mistake, as I think even my simple dummy model outperformed me here ... but at least I can blame Zindi for not using a random public LB sample. I won't do it, of course, because it is a bit like losing the game and blaming the ref.

It was a nice comp regardless; the data was really not adequate, so you had to do tricks like this. Tbh, if I do this again, I'll probably use the LB as CV again, given the lack of proper data.

I had a nice model towards the end. I was even hoping to climb higher on the public LB and tried very hard, but @Krishna_Priya and @Koleshjr kept their spots and even improved towards the end - so yeah, congrats again. At least KP and me don't have to write down everything ... that would have been tricky ...

Well done to top scorers, indeed looks like not having part of the last week horizon in the PB test set was catastrophic for some of us - not sure how you can verify such a long horizon with the available data, still keen to see the private scores and some of the solutions.

Lol @skaak. Nice one. Actually, I think I know my mistake. I forgot to remove 3 features from my final model before selecting submissions: the lag-168 values of all 3 KPIs. They have values in the 6th week but are all nulls in the 11th week. I knew this but somehow forgot about it 😅.
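A quick check before selecting submissions can catch this kind of slip. The sketch below flags features that are mostly populated in training but mostly null in the rows to be predicted; the helper name `features_missing_at_inference`, the `max_null_gap` threshold and the column names are assumptions for illustration, not the actual pipeline.

```python
import numpy as np
import pandas as pd

def features_missing_at_inference(train, test, feature_cols, max_null_gap=0.5):
    """Return features whose null fraction is much higher in test than in train."""
    train_null = train[feature_cols].isna().mean()
    test_null = test[feature_cols].isna().mean()
    gap = test_null - train_null
    return gap[gap > max_null_gap].index.tolist()

# Toy frames: the one-week lag exists in training but not for the far horizon.
train = pd.DataFrame({"hour_of_day": [0, 1, 2], "lag_168": [1.0, 2.0, 3.0]})
week11 = pd.DataFrame({"hour_of_day": [0, 1, 2], "lag_168": [np.nan] * 3})
to_drop = features_missing_at_inference(train, week11, ["hour_of_day", "lag_168"])
```

Running the check separately on each forecast week matters here, because a one-week lag is available for one horizon and missing for the other.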

By the way, just one small thing: grouping by station, cell, beam and hour and taking the median of the target can give you a score of 0.2007 on the public LB. This was my second submission. No ML. It was funny to me that not many people caught this :)
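For reference, a group-by median baseline of this kind can be sketched in a few lines of pandas. This assumes a long-format table with hypothetical column names `station`, `cell`, `beam`, `hour_of_day` and `target`, and it assumes "hour" means hour of day; the global-median fallback for unseen groups is also an addition for illustration.

```python
import pandas as pd

KEYS = ["station", "cell", "beam", "hour_of_day"]  # hypothetical column names

def hourly_median_baseline(train, test):
    """Predict the median target of each (station, cell, beam, hour) group."""
    medians = (
        train.groupby(KEYS, as_index=False)["target"]
        .median()
        .rename(columns={"target": "pred"})
    )
    merged = test.merge(medians, on=KEYS, how="left")
    # Groups never seen in training fall back to the global median.
    merged["pred"] = merged["pred"].fillna(train["target"].median())
    merged.index = test.index
    return merged["pred"]

train = pd.DataFrame({
    "station": [0] * 6, "cell": [0] * 6, "beam": [0] * 6,
    "hour_of_day": [0, 0, 0, 1, 1, 1],
    "target": [1.0, 2.0, 10.0, 3.0, 4.0, 5.0],
})
test = pd.DataFrame({"station": [0] * 3, "cell": [0] * 3, "beam": [0] * 3,
                     "hour_of_day": [0, 1, 2]})
preds = hourly_median_baseline(train, test)
print(preds.tolist())  # [2.0, 4.0, 3.5]
```

The median is less sensitive than the mean to the occasional traffic spike (the 10.0 above), which may be part of why such a simple baseline holds up under MAE.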

lol that is really funny - yes here you could try some very trivial techniques like that and probably beat a lot of the advanced techniques.

I'd love a nice ts challenge where you can make progress with some of the more recent and more advanced techniques. I'm now playing Kaggle Jane Street a little bit, but that one is so random that I think there, too, you can get a good score just by doing something trivial ...

So I hope for a nice challenge with loooooooong time series and maaaaany time series (like Jane Street, but less noise :-( ) to test these techniques.

Either that or more zindi computer vision challenges ...

Go for it KP, you did so well ... can't wait to hear how your trivial solution scored. Perhaps it was your best :-)

You know, what I did was to combine my "trivial" model (the dummy one) with more advanced ones, so if I were you, I'd sub the average between that group-by model and a more advanced model. Here, especially given the uncertainty around week 11, I hoped such a strategy would be robust. But look at my shake-out. I am not sure why it failed so dismally, but oh well ... life goes on ...

@Zindi will we get private scores on this?