Wadhwani AI Bollworm Counting Challenge

First of all, I would like to thank Zindi, Wadhwani AI, FAIR Forward, and GIZ for this great competition.

Congrats to all the winners!

Since my overfitting strategy failed, I will talk about what I did 2 months ago. BTW, there is nothing new here: I just kept improving my local CV MAE from beginning to end.

Solution summary:

TLDR

1. Data: When I went through the data, it took me a while to realize that many images contain worms but have no labels. My guess is that there are 3 classes in this competition, so I trained on all the data, including images with no labels. I also removed some images that have boxes but no labels.

2. Cross-validation: 5-fold GroupKFold
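The post doesn't say which key the folds were grouped by, so the sketch below assumes a hypothetical per-image `groups` list (for example, an ID for the image source). It only illustrates what group k-fold guarantees: all images from one group land in the same validation fold. It uses a greedy "largest group to the lightest fold" assignment similar in spirit to scikit-learn's `GroupKFold`; in practice you would just use `sklearn.model_selection.GroupKFold`.

```python
from collections import Counter


def group_kfold(groups, n_splits=5):
    """Split sample indices into folds without splitting any group.

    groups: one (hypothetical) group ID per sample.
    Returns a list of (train_idx, val_idx) pairs, one per fold.
    """
    sizes = Counter(groups)
    if len(sizes) < n_splits:
        raise ValueError("need at least as many groups as folds")
    fold_load = [0] * n_splits
    group_to_fold = {}
    # Visit groups from largest to smallest (ties broken by ID) and put each
    # one into the fold that currently holds the fewest samples.
    for g, size in sorted(sizes.items(), key=lambda kv: (-kv[1], str(kv[0]))):
        fold = min(range(n_splits), key=lambda f: fold_load[f])
        group_to_fold[g] = fold
        fold_load[fold] += size
    splits = []
    for fold in range(n_splits):
        val = [i for i, g in enumerate(groups) if group_to_fold[g] == fold]
        train = [i for i, g in enumerate(groups) if group_to_fold[g] != fold]
        splits.append((train, val))
    return splits
```

Grouping matters here because near-duplicate images from the same source would otherwise leak between train and validation and make local CV MAE look better than it is.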

3. Training

- Models: YOLOv5x6 at image size 2240, YOLOv5l6 at image size 2560

- Batch size: 8

- Epochs: 40

- Optimizer: SGD + momentum

- Augmentations: Hflip + Vflip + Mosaic + Mixup

- Loss: CELoss
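Of the augmentations listed, flips are the ones that must also move the labels. In YOLOv5 they are controlled by the `fliplr` and `flipud` hyperparameters; the post doesn't give the probabilities used, so the 0.5 defaults below are assumptions, and `flip_with_boxes` is a hypothetical helper showing how YOLO-format boxes (normalized `cx, cy, w, h`) change under each flip.

```python
import random

import numpy as np


def flip_with_boxes(image, boxes, p_h=0.5, p_v=0.5, rng=random):
    """Randomly flip an image and its YOLO-format boxes.

    image: H x W x C numpy array.
    boxes: list of (cls, cx, cy, w, h), coordinates normalized to [0, 1].
    """
    out_boxes = [list(b) for b in boxes]
    if rng.random() < p_h:
        image = image[:, ::-1]      # mirror left-right
        for b in out_boxes:
            b[1] = 1.0 - b[1]       # center x mirrors; width is unchanged
    if rng.random() < p_v:
        image = image[::-1, :]      # mirror top-bottom
        for b in out_boxes:
            b[2] = 1.0 - b[2]       # center y mirrors; height is unchanged
    return np.ascontiguousarray(image), [tuple(b) for b in out_boxes]
```

Vertical flips are a reasonable choice for trap photos like these, where worms have no natural "up", which is presumably why both flips were used.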

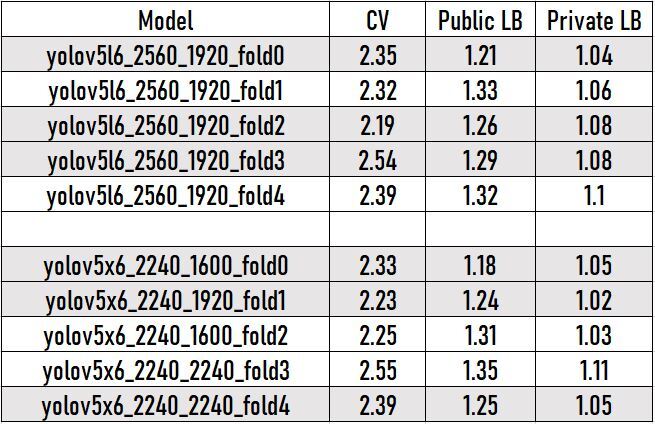

4. Performance of models

- For inference I chose a smaller image size than in training, because it improved both my CV and LB.

5. Models I chose:

- Balanced between CV and Public LB

- Yolov5l6: Fold 0, 2, 3

- Yolov5x6: Fold 0, 1, 4

6. Ensemble multi-scale models:

I used WBF (special thanks to @ZFTurbo) with a different confidence threshold per class (abw: 0.4, pbw: 0.3). I chose the thresholds based on CV and LB scores. The ensemble gives 1.225 Public LB / 0.999 Private LB.

I had a submission that scored 1.25 Public LB / 0.96 Private LB, but I didn't choose it because its Public LB was higher (worse) than the one above.
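In practice WBF comes from `ensemble_boxes.weighted_boxes_fusion`. To show what it does and where per-class thresholds fit, here is a simplified pure-Python re-implementation (equal model weights, averaged confidence only), followed by thresholding and counting. It is a sketch, not the library's code: the box tuple format, `iou_thr=0.55`, and applying the thresholds after fusion are assumptions; only the 0.4 / 0.3 values come from the post.

```python
def iou(a, b):
    """IoU of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def fuse_cluster(members, n_models):
    """Score-weighted mean of coordinates; mean score, scaled down when
    fewer boxes than models were fused."""
    total = sum(s for _, s in members)
    coords = tuple(sum(c[k] * s for c, s in members) / total for k in range(4))
    score = total / len(members) * min(len(members), n_models) / n_models
    return coords, score


def simple_wbf(model_preds, iou_thr=0.55):
    """model_preds: one list per model of (x1, y1, x2, y2, score, label)."""
    n_models = len(model_preds)
    fused = []
    labels = {p[5] for preds in model_preds for p in preds}
    for label in sorted(labels):
        boxes = sorted((p for preds in model_preds for p in preds if p[5] == label),
                       key=lambda p: p[4], reverse=True)
        clusters = []  # each entry: [members as (coords, score), fused coords]
        for p in boxes:
            coords, score = tuple(p[:4]), p[4]
            best, best_iou = None, iou_thr
            for c in clusters:  # match the best-overlapping fused box
                o = iou(c[1], coords)
                if o > best_iou:
                    best, best_iou = c, o
            if best is None:
                clusters.append([[(coords, score)], coords])
            else:
                best[0].append((coords, score))
                best[1] = fuse_cluster(best[0], n_models)[0]
        for members, _ in clusters:
            coords, score = fuse_cluster(members, n_models)
            fused.append((*coords, score, label))
    return fused


CLASS_THRESHOLDS = {"abw": 0.4, "pbw": 0.3}


def count_per_class(fused, thresholds=CLASS_THRESHOLDS):
    """Count fused boxes whose score clears their class threshold."""
    counts = {name: 0 for name in thresholds}
    for *_, score, label in fused:
        if label in thresholds and score >= thresholds[label]:
            counts[label] += 1
    return counts
```

The down-scaling in `fuse_cluster` is what makes a per-class threshold meaningful after fusion: a box found by only one of several models keeps a proportionally lower score and is more likely to be dropped.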

7. Filter false positive worms

After inference, I observed quite a few false-positive images (images with a lot of worms where only a few are detected). So I trained an additional model that only detects worms and used it as a filter: if the number of worms detected divided by the number of worms detected by the filter model is below a threshold (0.15 in my experiments), I removed all of that image's detections as false positives.

After using this approach: 1.221 Public LB / 0.998 Private LB (it improved the Private LB less than I expected 😂).

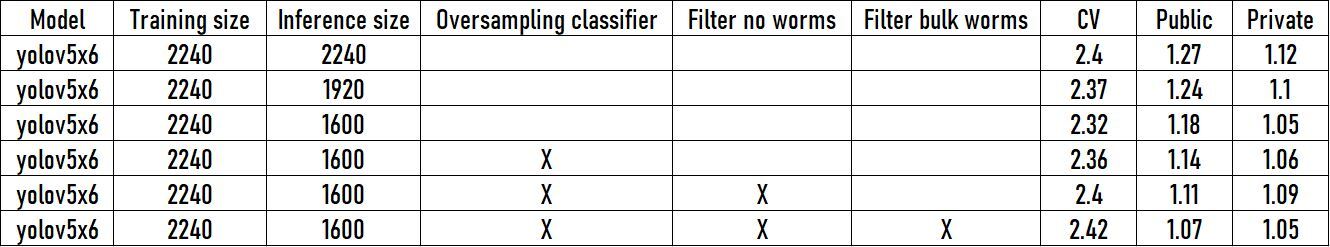
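The filter rule above can be written as a small per-image function. This is a minimal sketch assuming per-class counts from the main ensemble and a single total from the worm-only model; the function name is hypothetical, and keeping the counts when the worm-only model finds nothing is an assumption, since the post doesn't cover that case.

```python
def filter_false_positive_image(counts, worm_only_count, ratio_thr=0.15):
    """Zero out an image's counts when the main ensemble finds far fewer
    worms than the worm-only filter model (ratio below ratio_thr).

    counts: per-class counts from the main ensemble, e.g. {"abw": 1, "pbw": 2}.
    worm_only_count: total worms found by the worm-only model.
    """
    if worm_only_count == 0:
        # Assumption: nothing to compare against, so keep the counts.
        return dict(counts)
    detected = sum(counts.values())
    if detected / worm_only_count < ratio_thr:
        return {k: 0 for k in counts}
    return dict(counts)
```

For example, 3 detections against 40 from the worm-only model gives a ratio of 0.075, so the image's counts are zeroed; 3 against 10 (ratio 0.3) is kept.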

8. How to overfit Public LB 😃

- Oversampling: I cropped out all labeled boxes and oversampled the ABW class, split the crops into 5 folds, and trained ConvNeXt-Tiny and ConvNeXt-Small classifiers. After ensembling several classification models, I reached 1.14 on the Public LB.

- Filter no worms: Mentioned in section 7.

- Filter bulk worms: As in section 7, but for images containing more than 80 pbw, I used the result of the worm-only model.

- After applying all these tricks I reached 1.07 on the Public LB (BTW, @vityzy reached 1.03 on the LB👏).
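Two of these tricks are simple enough to sketch. Both helpers below are hypothetical: the oversampling factor is not given in the post, and reading "Filter bulk worms" as "replace the pbw count with the worm-only model's count when the main ensemble reports more than 80 pbw" is one interpretation of the description.

```python
import random


def oversample_class(samples, target_label, factor, seed=0):
    """Repeat crops of target_label so they appear `factor` times as often.

    samples: list of (crop_id, label) pairs. Returns a new shuffled list.
    """
    if factor < 1:
        raise ValueError("factor must be >= 1")
    extra = [s for s in samples if s[1] == target_label] * (factor - 1)
    out = samples + extra
    random.Random(seed).shuffle(out)
    return out


def override_bulk_pbw(counts, worm_only_count, bulk_thr=80):
    """For crowded images (pbw count above bulk_thr), use the worm-only
    model's count for pbw instead of the main ensemble's."""
    out = dict(counts)
    if out.get("pbw", 0) > bulk_thr:
        out["pbw"] = worm_only_count
    return out
```

Oversampling by duplication (rather than reweighting the loss) keeps the classifier training loop unchanged, which fits a setup where crops are simply fed to ConvNeXt in 5 folds.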

9. What didn't work

- Pseudo-labeling: I tried it many times, but it did not improve my score.

- BCE classification: It improved the Public LB but not my local CV.

10. Thank you

- This was my first time participating on the Zindi platform, and I was lucky enough to win this competition. 😄

- Thanks to everyone for sharing helpful discussions and notebooks.

Congratulations on 1st place and thanks for sharing - so much to learn from you.

Thank you!

Congratulations, your solution is quite cool, you deserve it! Looking at your training options, I'm really curious about your hardware.

Thanks, and congrats on your 5th place. Looking forward to seeing your solution.

BTW I used 4 x A100 for training.

Congratulations on first place. You have a shit load of compute😂.

What a shit load of computation :V No wonder I couldn't beat your public score. Maybe with 4 A100s I could beat it :))

😅 A100 is all you need

Haha, same here: without an A100 I would not have been able to get 2nd place either.

Congratulations on winning the competition. I was wondering what tools you used to track metrics and experiments. Personally, I find wandb and similar services quite awkward, because many runs have to be interrupted mid-training due to a bug or a quick code change for something I forgot, which results in a mess. Thanks

Thanks, I used wandb to visualize losses and track experiments.

Thanks! This is some new learning! And heartiest congratulations!

This is a powerful solution, optimised for robustness. Congratulations on winning 1st place, well deserved.

Thanks for the recognition of @ZFTurbo.

Thank you. I'm looking forward to seeing your solution 😊

Would you mind sharing the code? Thank you, and congratulations!