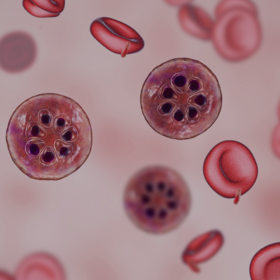

Lacuna Malaria Detection Challenge

Helping Uganda

$5 000 USD

Completed (over 1 year ago)

Object Detection

Computer Vision

1338 joined · 368 active

Start: Aug 16, 2024
Close: Nov 17, 2024
Reveal: Nov 17, 2024

Evaluation metric implementation

Help · 17 Oct 2024, 08:09 · 1

Hi @zindi! Could you please share the evaluation metric implementation? Right now I strongly suspect that the metric differs from the standard mAP and has separate logic for the NEG class. I made 3 submissions with different lower confidence thresholds (0.1, 0.2 and 0.05), and the scores differ a lot. With the standard COCO evaluation from pycocotools, such small changes in the threshold should not produce such a large difference.
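To make the reasoning about thresholds concrete, here is a minimal sketch of single-class average precision, assuming detections have already been matched to ground truth at IoU >= 0.5 and reduced to a (confidence, is-true-positive) pair. It uses all-point interpolation; pycocotools instead averages precision over 101 recall points, but both share the property illustrated here: detections below the old cut-off only extend the tail of the precision-recall curve, so low-confidence false positives leave AP unchanged and low-confidence true positives can only raise it. The function names and the detection list are hypothetical, made-up illustrations, not the competition's code or data.

```python
"""Single-class AP from already-matched detections (illustrative sketch).

Assumption: each detection was matched to ground truth at IoU >= 0.5 and
reduced to (confidence, is_true_positive). All-point interpolation is used;
pycocotools samples 101 recall points instead.
"""
from typing import List, Tuple

Detection = Tuple[float, bool]  # (confidence, is_true_positive)


def average_precision(dets: List[Detection], n_gt: int) -> float:
    """Area under the interpolated precision-recall curve; needs n_gt > 0."""
    ranked = sorted(dets, key=lambda d: d[0], reverse=True)
    tp = fp = 0
    recalls: List[float] = []
    precisions: List[float] = []
    for _, is_tp in ranked:
        if is_tp:
            tp += 1
        else:
            fp += 1
        recalls.append(tp / n_gt)
        precisions.append(tp / (tp + fp))
    # Precision envelope: each point takes the best precision at equal or higher recall.
    for i in range(len(precisions) - 2, -1, -1):
        precisions[i] = max(precisions[i], precisions[i + 1])
    # Add a rectangle of area (recall gain x precision) wherever recall increases.
    ap, prev_recall = 0.0, 0.0
    for r, p in zip(recalls, precisions):
        ap += (r - prev_recall) * p
        prev_recall = r
    return ap


def ap_at_threshold(dets: List[Detection], n_gt: int, threshold: float) -> float:
    """Drop detections below a confidence cut-off, then score the rest."""
    return average_precision([d for d in dets if d[0] >= threshold], n_gt)


# Made-up detections for one class with 5 ground-truth boxes.
EXAMPLE_DETS: List[Detection] = [
    (0.95, True), (0.90, True), (0.80, False), (0.60, True), (0.30, False),
    (0.15, False), (0.12, False), (0.08, False), (0.07, True), (0.03, False),
]

if __name__ == "__main__":
    for thr in (0.2, 0.1, 0.05):
        print(thr, round(ap_at_threshold(EXAMPLE_DETS, 5, thr), 4))
```

On this toy data, lowering the cut-off from 0.2 to 0.1 adds only false positives and AP stays at 0.55; lowering it to 0.05 picks up one more true positive and AP rises to about 0.639. Under this standard definition, a score that drops when the threshold is lowered is not possible.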

Is this just what any standard package would compute, and you're giving us a behind-the-scenes look at mAP@0.5? Or is it a custom script to run yourself if needed? How are NEG classes handled? From my tests, it seems that neither the confidence nor the box dimensions change the score. Why?
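For comparison with the observation above, here is how a standard AP@0.5 pipeline would normally react to box dimensions and confidences. This is a simplified greedy matcher, roughly in the spirit of COCO-style evaluation but without crowd regions or per-image detection caps: predictions are visited in descending confidence, and each claims the still-unmatched ground-truth box it overlaps most, provided the IoU is at least 0.5. The (x_min, y_min, x_max, y_max) box format and the names `iou` and `match` are assumptions for illustration, not the competition's implementation.

```python
"""Greedy IoU matching at 0.5 (illustrative sketch, not the competition's code).

Assumptions: boxes are (x_min, y_min, x_max, y_max); crowd regions and the
per-image detection caps used by pycocotools are ignored.
"""
from typing import List, Tuple

Box = Tuple[float, float, float, float]


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def match(preds: List[Tuple[float, Box]], gts: List[Box], thr: float = 0.5) -> List[bool]:
    """Return a true-positive flag per prediction, in input order.

    Predictions are visited from highest to lowest confidence; each claims the
    still-unclaimed ground-truth box with the highest IoU, if that IoU >= thr.
    """
    order = sorted(range(len(preds)), key=lambda i: preds[i][0], reverse=True)
    claimed = set()
    is_tp = [False] * len(preds)
    for i in order:
        best_j, best_iou = -1, thr
        for j, gt in enumerate(gts):
            if j in claimed:
                continue
            overlap = iou(preds[i][1], gt)
            if overlap >= best_iou:
                best_j, best_iou = j, overlap
        if best_j >= 0:
            claimed.add(best_j)
            is_tp[i] = True
    return is_tp


if __name__ == "__main__":
    gt = [(10.0, 10.0, 50.0, 50.0)]
    print(match([(0.9, (12.0, 12.0, 50.0, 50.0))], gt))  # IoU 0.9025 -> [True]
    print(match([(0.9, (10.0, 10.0, 30.0, 30.0))], gt))  # IoU 0.25 -> [False]
    # Two near-duplicates: the higher-confidence one gets the credit.
    print(match([(0.9, (12.0, 12.0, 50.0, 50.0)), (0.8, (10.0, 10.0, 48.0, 48.0))], gt))  # [True, False]
    print(match([(0.8, (12.0, 12.0, 50.0, 50.0)), (0.9, (10.0, 10.0, 48.0, 48.0))], gt))  # [False, True]
```

In a metric built on this kind of matching, shrinking a box can turn a true positive into a false positive, and swapping confidences changes which of two overlapping predictions gets credit. A score that stays fixed when both are changed therefore suggests that at least part of the scoring does not go through standard IoU matching.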