MPEG-G Microbiome Classification Challenge

Start: Jun 20, 25 · Close: Sep 15, 25 · Reveal: Sep 15, 25

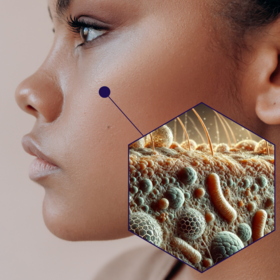

MPEG-G Microbiome Classification Pipeline

Data · 15 Sep 2025, 22:07 · 0

In this project, we worked with microbiome sequencing data stored in MPEG-G, the ISO/IEC 23092 standard for compressed genomic data. The goal was to classify samples into four classes: Mouth, Skin, Nasal, and Stool. Here’s a summary of the ideas and the data processing workflow:

1. Handling raw sequences

- Each sample comes as a FASTQ file containing DNA reads.

- Read length varies by class: Skin and Mouth reads are ~124–125 base pairs, while Nasal and Stool reads are ~400 base pairs.

- This discrepancy introduces a strong bias if raw counts are used directly, because sequence length correlates with class.
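The length bias described above is easy to check per sample. The following is a minimal sketch, assuming plain four-line FASTQ records (no wrapped sequence lines); the function names `read_fastq_sequences` and `length_summary` are hypothetical and not taken from the original pipeline.

```python
from statistics import median
from typing import Iterable, Iterator


def read_fastq_sequences(lines: Iterable[str]) -> Iterator[str]:
    """Yield the sequence line of each 4-line FASTQ record.

    Assumes unwrapped records: header, bases, '+', qualities.
    """
    for i, line in enumerate(lines):
        if i % 4 == 1:  # second line of every record holds the bases
            yield line.strip().upper()


def length_summary(seqs: Iterable[str]) -> dict:
    """Summarize read lengths for one sample."""
    lengths = [len(s) for s in seqs]
    return {
        "n_reads": len(lengths),
        "min_len": min(lengths),
        "median_len": median(lengths),
        "max_len": max(lengths),
    }


# Toy two-read sample standing in for a real FASTQ file.
toy_fastq = """@r1
ACGTAC
+
IIIIII
@r2
ACGT
+
IIII
""".splitlines()

summary = length_summary(read_fastq_sequences(toy_fastq))
print(summary)
```

For a real file, the same generator can be fed an open file handle instead of the toy list. Comparing `median_len` across classes would show whether length alone separates them.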

2. Cleaning and deduplicating sequences

- Sequences are extracted from the FASTQ and stored as plain text.

- Duplicate sequences are counted to understand their abundance.

- Low-count sequences, which are likely sequencing errors, are merged into high-count sequences using Hamming distance thresholds: ≤3 for ~124–125 bp sequences and ≤8 for ~400 bp sequences.

- This denoising step aims to keep biologically relevant variants while removing likely sequencing noise.
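The deduplication and merging steps above can be sketched as follows. This is an illustrative version under stated assumptions, not the original implementation: the `min_count` cut-off that defines a "low-count" sequence is hypothetical (the post does not give one), only sequences of equal length are compared, and low-count sequences with no close parent are kept unchanged.

```python
from collections import Counter


def hamming(a: str, b: str) -> int:
    """Number of mismatching positions between two equal-length strings."""
    if len(a) != len(b):
        raise ValueError("Hamming distance needs equal-length sequences")
    return sum(x != y for x, y in zip(a, b))


def denoise(counts: Counter, max_dist: int, min_count: int = 2) -> Counter:
    """Merge low-count sequences into the nearest high-count sequence.

    max_dist  -- Hamming threshold (the post uses 3 for ~125 bp, 8 for ~400 bp)
    min_count -- assumed cut-off: counts below this are treated as low-count
    """
    # High-count sequences act as merge targets, most abundant first.
    parents = sorted(
        (s for s, n in counts.items() if n >= min_count),
        key=lambda s: (-counts[s], s),
    )
    merged = Counter({s: counts[s] for s in parents})
    for seq, n in counts.items():
        if n >= min_count:
            continue
        best_dist, target = max_dist + 1, None
        for parent in parents:
            if len(parent) != len(seq):
                continue  # only compare sequences of the same length
            d = hamming(seq, parent)
            if d < best_dist:  # strict '<' keeps the more abundant parent on ties
                best_dist, target = d, parent
        # Unmatched low-count sequences are kept as they are (assumption).
        merged[target if target is not None else seq] += n
    return merged


reads = ["ACGTACGT"] * 5 + ["ACGTACGA"] + ["TTTTTTTT"] * 3 + ["GGGGCCCC"]
raw_counts = Counter(reads)           # deduplicate and count abundance
clean_counts = denoise(raw_counts, max_dist=3)
print(clean_counts)
```

In the toy data, `ACGTACGA` differs from `ACGTACGT` at one position and is absorbed by it, while `GGGGCCCC` is too far from every parent and stays separate. The pairwise comparison is quadratic in the number of unique sequences, so a real dataset would likely need bucketing by length or another shortcut.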

3. Sequence representation via k-mer encoding

- Instead of using raw sequences directly, each sequence is converted into k-mer features:

  - Overlapping k-length substrings (k-mers) are extracted from sequences using a sliding window.

  - Each k-mer is weighted by the abundance of the sequence it came from.

  - Counts are normalized by the total number of k-mers per sample to prevent the model from using trivial signals like sequence length.

- For example, using 5-mers generates 4⁵ = 1024 features per sample, capturing the compositional patterns of the DNA reads.
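A minimal sketch of the abundance-weighted, normalized k-mer encoding described above. The function names are hypothetical, and skipping k-mers that contain characters outside A, C, G, T (such as `N`) is an assumption the post does not state.

```python
from itertools import product


def kmer_index(k: int) -> dict:
    """Map every k-mer over the alphabet ACGT to a fixed column index."""
    return {"".join(p): i for i, p in enumerate(product("ACGT", repeat=k))}


def kmer_features(seq_counts: dict, k: int = 5) -> list:
    """Abundance-weighted, normalized k-mer frequencies for one sample.

    seq_counts maps each unique sequence to its abundance in the sample.
    """
    index = kmer_index(k)
    vec = [0.0] * len(index)
    for seq, abundance in seq_counts.items():
        # Sliding window with step 1 gives overlapping k-mers.
        for i in range(len(seq) - k + 1):
            col = index.get(seq[i:i + k])
            if col is not None:  # assumption: ignore k-mers with ambiguous bases
                vec[col] += abundance
    total = sum(vec)
    # Dividing by the total removes the dependence on read length and depth.
    return [v / total for v in vec] if total else vec


index = kmer_index(5)
features = kmer_features({"ACGTAC": 2, "ACGTA": 1}, k=5)
print(len(features), features[index["ACGTA"]], features[index["CGTAC"]])
```

In the toy sample, `ACGTA` occurs with total weight 3 and `CGTAC` with weight 2, so after normalization they hold 3/5 and 2/5 of the mass, regardless of how long the reads are.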

4. Reducing data size without losing information

- By representing sequences together with their counts instead of keeping full raw FASTQ files, storage was reduced dramatically (from ~160 GB to ~897 MB) while preserving the ability to classify samples effectively.

- This suggests that compact, count-based representations of genomic data can be sufficient for machine learning.
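One way to store sequences together with their counts is a gzip-compressed, tab-separated table per sample. The sketch below is only an illustration of that idea: the file layout and the `dump_counts` / `load_counts` helpers are hypothetical, and the post does not say which on-disk format was actually used.

```python
import gzip
import io
from collections import Counter


def dump_counts(counts: Counter, fileobj) -> None:
    """Write 'sequence<TAB>count' lines, gzip-compressed, most abundant first."""
    with gzip.GzipFile(fileobj=fileobj, mode="wb") as gz:
        for seq, n in sorted(counts.items(), key=lambda kv: (-kv[1], kv[0])):
            gz.write(f"{seq}\t{n}\n".encode("ascii"))


def load_counts(fileobj) -> Counter:
    """Read the table written by dump_counts back into a Counter."""
    counts = Counter()
    with gzip.GzipFile(fileobj=fileobj, mode="rb") as gz:
        for line in gz:
            seq, n = line.decode("ascii").rstrip("\n").split("\t")
            counts[seq] = int(n)
    return counts


counts = Counter({"ACGTACGT": 6, "TTTTTTTT": 3, "GGGGCCCC": 1})
buf = io.BytesIO()          # stands in for a file opened in binary mode
dump_counts(counts, buf)
buf.seek(0)
restored = load_counts(buf)
print(restored == counts)
```

Each unique sequence is stored once, and headers and quality strings are dropped, which is where most of the size reduction in such a representation comes from.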

5. Federated learning setup

- To mimic real-world scenarios where different hospitals or labs hold different patients, samples were split into clients:

  - Subjects are kept intact; all samples from the same individual remain on the same client.

  - Each client contains roughly balanced classes to avoid extreme imbalance.

  - Clients have similar numbers of samples to avoid domination by any single subject.

- The global model is trained collaboratively across clients, preserving data privacy while leveraging distributed information.
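The client split described above can be approximated with a simple greedy assignment. This is a sketch under assumptions, not the original procedure: subjects are placed largest first, and each goes to the client whose current class counts overlap least with the subject's own, with ties broken by client size. The function name and the `(sample_id, subject_id, label)` tuple layout are hypothetical.

```python
from collections import Counter, defaultdict


def split_by_subject(samples, n_clients):
    """Assign whole subjects to clients, balancing classes and sizes greedily.

    samples -- iterable of (sample_id, subject_id, label) tuples
    Returns a list of n_clients lists of the same tuples.
    """
    by_subject = defaultdict(list)
    for sample_id, subject_id, label in samples:
        by_subject[subject_id].append((sample_id, label))

    # Place subjects with the most samples first so small ones fill the gaps.
    subjects = sorted(by_subject, key=lambda s: (-len(by_subject[s]), s))
    clients = [[] for _ in range(n_clients)]
    class_counts = [Counter() for _ in range(n_clients)]

    for subj in subjects:
        add = Counter(label for _, label in by_subject[subj])

        def cost(c):
            # Overlap between the client's classes and the subject's classes;
            # a low value means the subject adds classes the client lacks.
            overlap = sum(class_counts[c][lab] * n for lab, n in add.items())
            return (overlap, len(clients[c]), c)

        best = min(range(n_clients), key=cost)
        clients[best].extend((sid, subj, lab) for sid, lab in by_subject[subj])
        class_counts[best].update(add)
    return clients


# Toy data: four subjects, each with one Mouth and one Stool sample.
samples = [
    (f"{subj}_{label}", subj, label)
    for subj in ("s1", "s2", "s3", "s4")
    for label in ("Mouth", "Stool")
]
clients = split_by_subject(samples, n_clients=2)
for i, members in enumerate(clients):
    print(i, len(members), sorted({subj for _, subj, _ in members}))
```

Because assignment happens per subject, no individual is ever split across clients. The greedy rule only encourages class and size balance and does not guarantee it, so the resulting per-client class counts are worth inspecting before training.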
